iSL Labs is building accessibility tools for People of Determination.

Early Access

Features

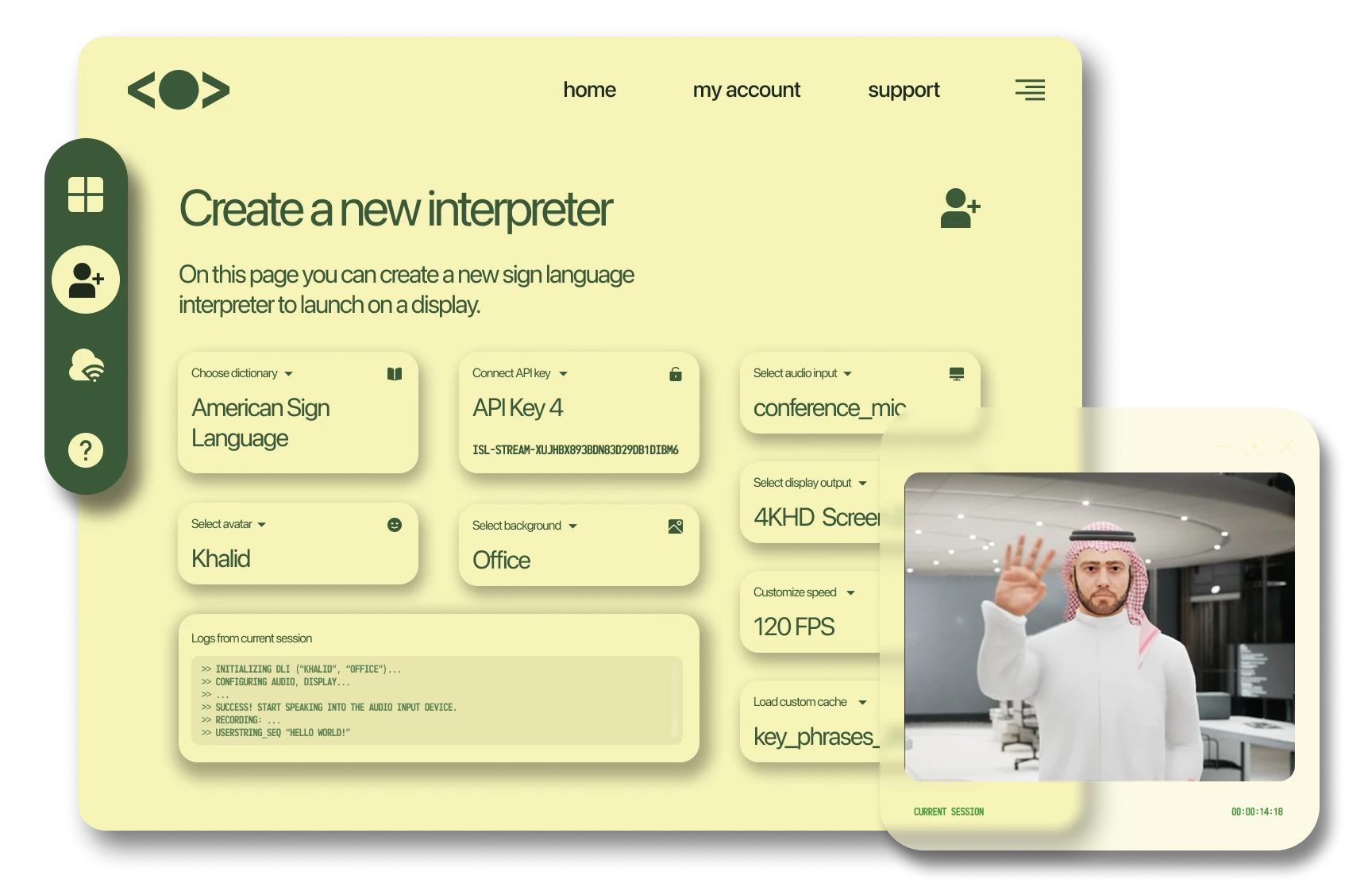

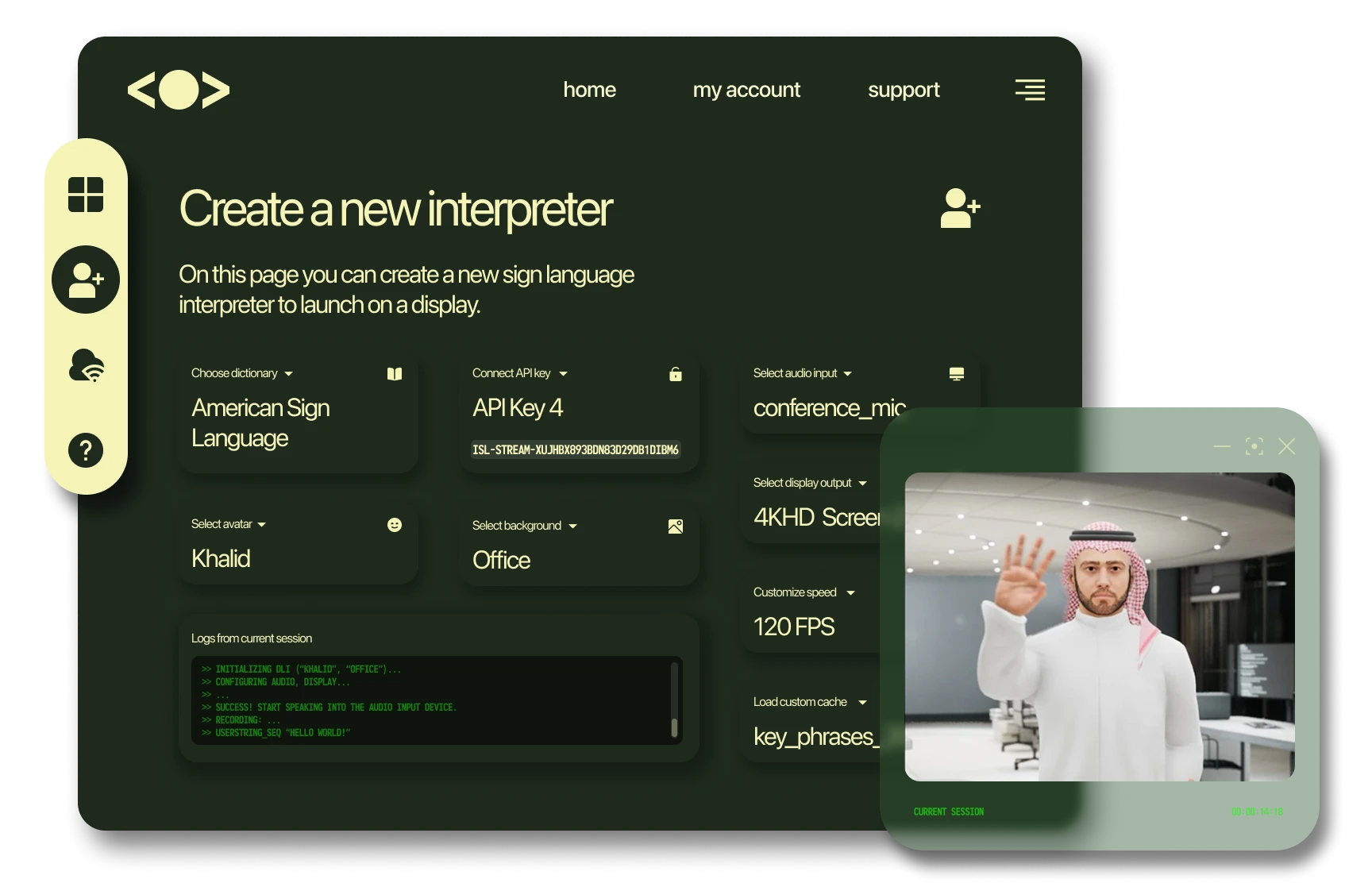

Speech to Sign Language

Our proprietary machine learning pipeline automatically generates a digital sign language interpreter to accompany the spoken word.

Live Streaming

Our digital interpreter signs what you’re saying as you’re saying it, making speeches and events inclusive and effective.

Developers' API

Our AWS API allows developers to embed a digital sign language interpreter within a web or mobile application.

Process

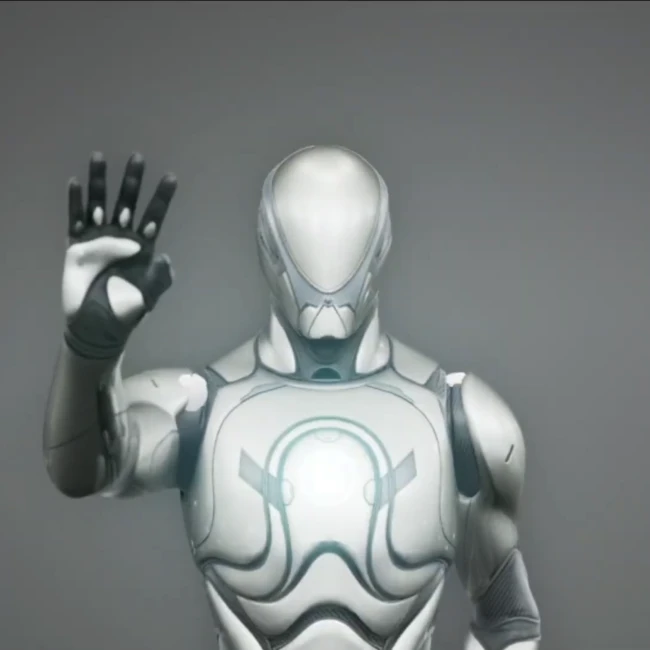

GMP Skeletal → UESkin_model → Production

Information should be accessible.

Use Cases

Customer Software

Our model can be embedded within your customer-facing apps, websites, or edge devices to enhance customer experience.

Events & Conferences

A digital sign language interpreter is perfect for accompanying speakers and presentations during public events and gatherings.

Government Projects

Ministries and government institutions can use our accessibility model across their digital and in-person public communications.

About Us

iSL Labs is a small team of ML researchers and engineers based in the Dubai International Financial Centre Innovation Hub. We work closely with governments and institutions to build accessibility software that reaches a large audience.

AI has started to permeate and enhance our day-to-day lives. It does this by performing cognitive labor otherwise performed by humans; as a result, most teams in the space focus on profit-driven products that streamline businesses and large operations.

Our goal in the space, however, is to deliver cutting-edge technology that fundamentally improves quality of life for People of Determination. That's why we started iSL Labs: to house research and development of AI-driven accessibility tools.